All About MCP Resources

Skugan is a seasoned technology leader with over 15 years of progressive experience in the software and cloud industry. He possesses deep expertise across Technical Account Management, Program Management, IT Consulting, Pre-Sales, and complex Project Delivery. Skugan has consistently played a pivotal leadership role in large-scale customer transformation programs, delivering successful outcomes through strong stakeholder collaboration, strategic decision-making, and disciplined execution. Notable achievements include leading the Customer Environment Replication for SAP BOBJ, ECC Validation Environment setup, and currently driving a strategic SAP Cloud for Customer (C4C) migration to AWS, initiatives that have earned him multiple recognitions and awards. Customer-centric by approach, Skugan has guided numerous enterprises in achieving their digital transformation goals, enabling seamless transitions from legacy systems to modern cloud-native architectures. His technical proficiency spans a wide range of platforms and solutions, including:

Cloud: AWS (10x certified), Microsoft Azure (certified)

SAP Portfolio: SAP BusinessObjects BI, SAP Data Intelligence, SAP HANA, SAP Cloud for Customer (C4C), SAP Customer Data Cloud, SAP Commerce Cloud, SAP C4C V1, SAP Sales and Service Cloud V2, SAP Sales Cloud V2 Integration Support with S/4HANA

AI: GenAI, Agentic AI, Azure OpenAI, RAG-based frameworks, LangChain, LangGraph, LlamaIndex, etc.

Skugan is recognized for his ability to manage complex, high-stakes programs with exceptional planning, prioritization, delegation, and people-management skills. He has trained and mentored more than 180 colleagues on AWS and Azure technologies and has designed and delivered numerous global workshops on cloud adoption, SAP on hyperscalers, and modern data & AI architectures. His blend of technical depth, customer focus, and proven program leadership makes him a trusted advisor and transformation partner for enterprises embarking on cloud, data, and AI journeys.

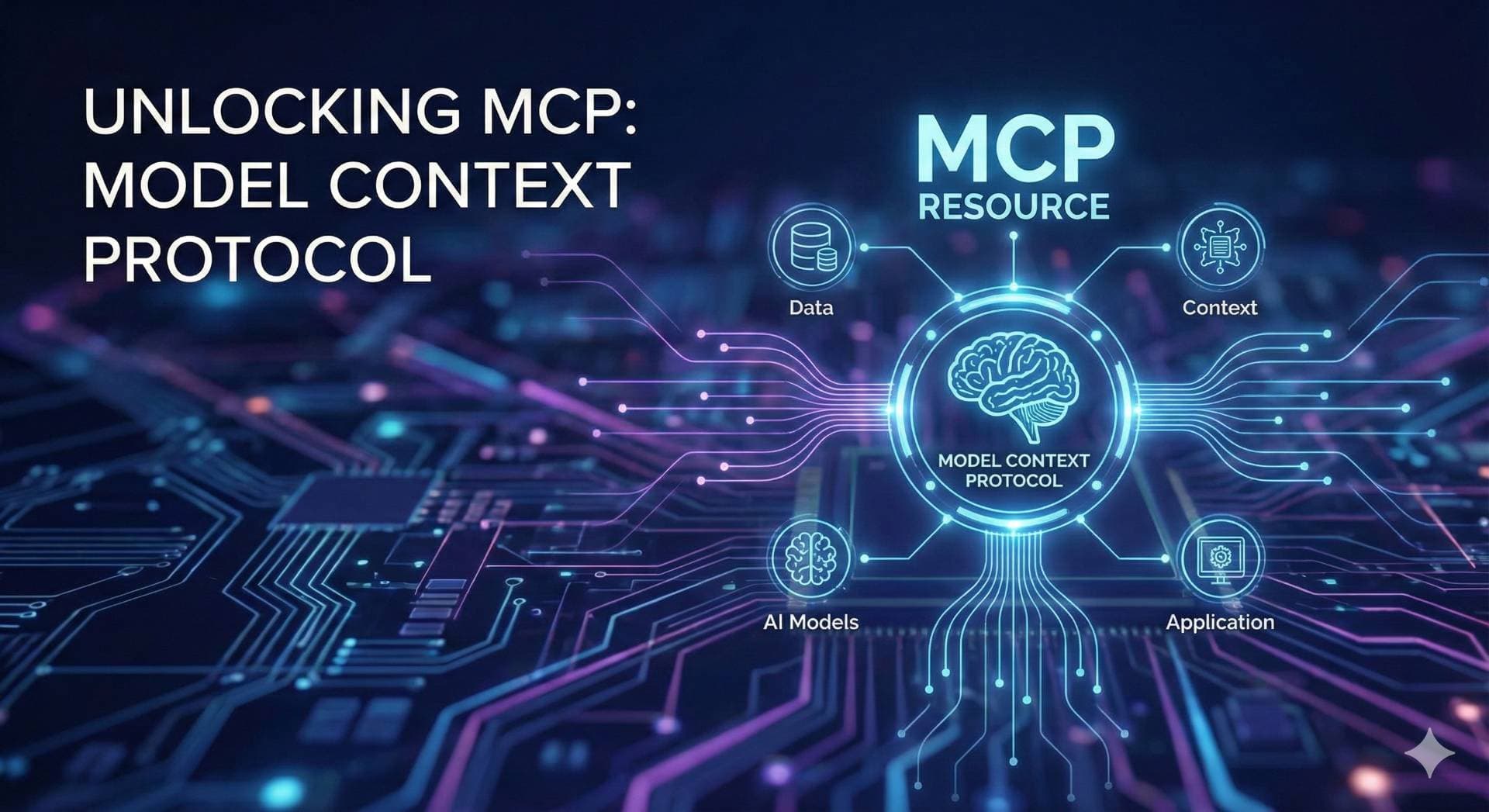

What Is an MCP Resource? How Is It Related to MCP Tools?

An MCP Resource is a read-only, addressable content entity exposed by the MCP server. Resources provide structured, contextual data that MCP clients can retrieve and deliver to LLMs for reasoning or enhanced understanding. They typically include items like logs, configuration data, files, real-time statistics, or any other data that can be represented as text, JSON, or binary blobs (e.g., PDFs, images).

Key points about MCP Resources:

They are read-only, meaning that reading them should cause no side effects or state changes.

Resources are identified via unique URIs (e.g., note://, config://, file://).

Access is done via standard MCP requests like resources/list to discover and resources/read to fetch content.

They allow LLMs to have contextual information without executing commands or changing state.
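To make the two requests concrete, here is a minimal sketch of the JSON-RPC 2.0 messages a client might send, plus the general shape of a resources/read result, written as plain Python dictionaries. The field names follow the MCP specification as I understand it; the URI note://welcome, the request ids, and the text payload are made-up placeholders.

```python
import json
from typing import Optional


def make_request(request_id: int, method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request envelope of the kind MCP uses."""
    message = {"jsonrpc": "2.0", "id": request_id, "method": method}
    if params is not None:
        message["params"] = params
    return message


# Discover what the server exposes.
list_request = make_request(1, "resources/list")

# Fetch one resource by URI (note://welcome is a hypothetical URI).
read_request = make_request(2, "resources/read", {"uri": "note://welcome"})

# General shape of a successful resources/read result: a list of content
# items, each with its URI, a MIME type, and either "text" or a base64 "blob".
read_response = {
    "jsonrpc": "2.0",
    "id": 2,
    "result": {
        "contents": [
            {"uri": "note://welcome", "mimeType": "text/plain", "text": "Hello"}
        ]
    },
}

print(json.dumps(read_request, indent=2))
```

In practice an MCP client library builds and sends these messages for you; the sketch only shows what travels over the wire.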

How Resources relate to Tools:

Tools are actionable and executable commands exposed by MCP servers. They perform operations such as calculations, database writes, or API calls that can change state or provide dynamic outputs.

Resources are passive data sources that provide context to the LLM but do not execute or trigger actions.

Typically, an MCP server exposes both resources (context/data) and tools (actions/operations).

Resources can be used by tools to provide necessary context or input data for execution.

From the LLM’s perspective, tools enable it to do things while resources help it know things.

Example:

A resource could be a log file or pricing data accessible to the LLM's context.

A tool could be a function calculating the current price or executing a trade.

Together, resources and tools empower LLMs with both rich context and actionable capabilities via the MCP protocol.

This clear distinction lets the MCP server expose data and functions cleanly and predictably, so clients and LLMs can consume context and invoke actions seamlessly.
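The difference between "knowing" and "doing" can be illustrated without any MCP library. The sketch below is a toy model only: the prices and positions dictionaries and both function names are hypothetical stand-ins for a pricing resource and a trade-executing tool.

```python
# Toy state standing in for a trading backend (all names are hypothetical).
prices = {"BTCUSDT": 65000.0}
positions = {}


def read_pricing_resource() -> dict:
    """Resource-style access: returns a copy of the data, changes nothing."""
    return dict(prices)


def execute_trade_tool(symbol: str, quantity: float) -> dict:
    """Tool-style access: performs an action and mutates state."""
    positions[symbol] = positions.get(symbol, 0.0) + quantity
    return {"symbol": symbol, "position": positions[symbol]}


before = read_pricing_resource()
after = read_pricing_resource()
print(before == after)   # reading twice yields the same data
print(positions)         # still empty: reads had no side effects

result = execute_trade_tool("BTCUSDT", 0.5)
print(positions)         # the tool call changed state
```

Reading the resource any number of times leaves positions untouched, while a single tool call changes it. That asymmetry is the reason the two primitives are kept separate.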

What MCP Resources Offer

In the context of MCP and FastMCP, resources are a core primitive that provides read-only access to data or content. Unlike tools (which are invocable functions for performing actions, like API calls or computations), resources expose static or dynamically generated data that clients can directly read and use as context in conversations or reasoning. This could include configurations, lists, files, database queries, or any structured information.

Resources help by:

Providing scoped, persistent context to reduce the need for repeated tool calls or large prompts.

Allowing efficient data access without the overhead of function invocation (e.g., no need for the model to "decide" to call something).

Supporting metadata like descriptions, tags, and annotations for better discoverability.

Enabling dynamic generation (e.g., fetching fresh data on request) while remaining read-only.

Reducing token usage and latency in LLM interactions by injecting data directly into the context.

They are particularly useful in scenarios like the Binance sample code at the end of this post, where tools handle actions (e.g., getting specific prices), but resources can offer supplementary data (e.g., a list of available symbols) to guide or inform those actions.

Communication Flow for Resources

The flow involves a client-server interaction over the MCP protocol:

Client Request: An MCP client (e.g., an LLM application) sends a resources/read request to the server, specifying the resource's unique URI (e.g., "data://symbols"). Resources are application-controlled, so the host application decides when to do this: some clients attach a resource when the user selects it or when its URI is referenced, while others read it explicitly through the client's API.

Server Processing:

The server (your FastMCP instance) matches the URI to a registered resource.

If the resource is defined as a function (dynamic), it executes the function lazily (only on request). Parameters can be passed if the URI uses templates (e.g., "data://symbols/{category}").

The function's return value is converted to MCP-compatible content: strings become text/plain, dicts/lists become application/json (auto-serialized), bytes become base64-encoded blobs.

Metadata (e.g., name, description, mime_type) is included in the response for client use.

Server Response: The server returns the content directly to the client. If the resource list changes (e.g., you add/enable/disable one during runtime), the server may send a notifications/resources/list_changed notification to active clients.

Client Usage: The client (e.g., LLM) receives the data and incorporates it into its context. For example, an LLM could read a resource to get a list of valid symbols before calling your get_price tool, improving accuracy without extra steps.

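This flow is stateless and read-only by design, which keeps it safe. Some FastMCP versions let you declare this explicitly through annotations such as readOnlyHint: True, although the decorator used in the sample code later in this post raised a TypeError for that argument, so it is omitted there. Errors (e.g., from API failures) are handled by raising exceptions, which translate to MCP error responses.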
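The server-side steps described above (match the URI, run the function lazily, convert the return value) can be sketched with the standard library alone. This is an illustration of the idea, not FastMCP's actual implementation: the resource decorator, the _handlers registry, to_content, read_resource, and the symbol table are all hypothetical names and data.

```python
import base64
import json
import re

# Registry of URI templates -> (compiled pattern, handler function).
_handlers = {}


def resource(template: str):
    """Register a function under a URI template such as data://symbols/{category}."""
    # Split on {name} placeholders; odd positions hold the placeholder names.
    parts = re.split(r"\{(\w+)\}", template)
    pattern = "".join(
        f"(?P<{part}>[^/]+)" if index % 2 else re.escape(part)
        for index, part in enumerate(parts)
    )

    def decorator(func):
        _handlers[template] = (re.compile(f"^{pattern}$"), func)
        return func

    return decorator


def to_content(uri: str, value) -> dict:
    """Convert a handler's return value into an MCP-style content item."""
    if isinstance(value, bytes):
        blob = base64.b64encode(value).decode("ascii")
        return {"uri": uri, "mimeType": "application/octet-stream", "blob": blob}
    if isinstance(value, str):
        return {"uri": uri, "mimeType": "text/plain", "text": value}
    return {"uri": uri, "mimeType": "application/json", "text": json.dumps(value)}


def read_resource(uri: str) -> dict:
    """Match the URI, run the handler only now (lazily), and wrap the result."""
    for pattern, func in _handlers.values():
        match = pattern.match(uri)
        if match:
            return {"contents": [to_content(uri, func(**match.groupdict()))]}
    raise LookupError(f"Unknown resource: {uri}")


@resource("data://symbols/{category}")
def symbols_by_category(category: str) -> list:
    # Made-up data; a real handler might query an API here.
    table = {"major": ["BTCUSDT", "ETHUSDT"], "stable": ["USDCUSDT"]}
    return table.get(category, [])


response = read_resource("data://symbols/major")
print(response)
```

The handler only runs when read_resource is called, and the {category} segment of the URI arrives as a keyword argument, which mirrors how templated resources behave from the caller's point of view.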

Pros of Using Resources vs. Without Resources

With Resources:

Efficiency: Direct data access avoids the multi-step process of tool calls (e.g., model decides to call, invokes, waits for result). This reduces latency, token costs, and context overload.

Better Context Management: Resources can be referenced in prompts or auto-injected, providing structured data (e.g., JSON lists) that helps the model reason more effectively without hallucinating.

Flexibility: Supports dynamic data, templates for parameterization, async execution, and context access (e.g., via ctx: Context parameter for request-specific info).

Scalability: Ideal for read-heavy scenarios, like exposing configs or lists that don't change often. Notifications keep clients updated on changes.

Pros in Your Code Context: Complements your tools by providing upfront data (e.g., valid symbols), reducing invalid tool calls (e.g., bad symbols).

Without Resources (Relying Only on Tools):

Pros: Simpler if all interactions are action-oriented—everything is a callable function, so no need to distinguish read vs. write. Tools can handle both data retrieval and mutations in one paradigm.

Cons: Overkill for passive data access; each request becomes a full tool invocation, increasing steps, potential errors, and costs. For example, fetching a static list via a tool requires the model to explicitly call it every time, bloating prompts. In your code, without resources, users might misuse tools (e.g., call get_price with invalid symbols), leading to more failures.

In summary, resources shine for "give me data" scenarios, while tools are for "do something." Using both (as in MCP best practices) creates a balanced server.

As an example, consider the MCP resource decorator used in the sample code at the end of this post.

A common point of confusion: can we use tools directly instead of resources? More detail is provided below.

We know that tools can perform the required actions. In this example, a tool could just as well fetch the trading symbols by calling api.binance.com/api/v3/exchangeInfo directly.

Why Use an MCP Resource Instead of Directly Calling the MCP Tools?

Yes, you could expose get_available_symbols() as an MCP tool (e.g., @mcp.tool()), but it’s less ideal for this use case:

Tool Overhead: Tools are for actions and require the client (e.g., LLM) to decide to invoke them, pass parameters (even if none are needed here), and wait for the result. This adds unnecessary steps for simple data access.

Resource Simplicity: Resources are read-only and can be directly referenced in prompts or auto-injected into the LLM’s context, making them more efficient for data like a symbol list.

Client Expectation: In MCP, clients expect resources for data and tools for actions. Exposing get_available_symbols as a tool might confuse clients expecting a resource for a list.

Example Scenario to Illustrate the Difference

Let’s say an LLM wants to get the price of a valid Binance symbol. Here’s how it works with and without the resource:

With Resource (data://available-symbols):

The LLM’s prompt says: “Use data://available-symbols to pick a valid symbol, then get its price.”

The MCP client reads data://available-symbols from the server and places the symbol list (e.g., ["BTCUSDT", "ETHUSDT", ...]) into the LLM’s context; whether this happens automatically depends on the host application.

The LLM sees BTCUSDT is valid and calls the get_price("BTCUSDT") tool.

Benefits: The LLM gets the symbol list without extra steps, avoids invalid inputs (e.g., get_price("INVALID")), and saves tokens by not invoking a tool for the list.
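The "check first, then call" pattern from the steps above might look like the sketch below. Both functions are offline stand-ins (hypothetical, no network access): read_available_symbols plays the role of reading data://available-symbols, and get_price plays the role of the tool, returning a made-up price.

```python
def read_available_symbols() -> list:
    """Pretend result of reading data://available-symbols."""
    return ["BTCUSDT", "ETHUSDT"]


def get_price(symbol: str) -> dict:
    """Pretend get_price tool; the price value is a placeholder."""
    return {"symbol": symbol, "price": "0.00"}


def price_if_valid(symbol: str) -> dict:
    """Check the symbol against the resource before spending a tool call."""
    valid = set(read_available_symbols())
    if symbol not in valid:
        return {"error": f"{symbol} is not a listed symbol"}
    return get_price(symbol)


print(price_if_valid("BTCUSDT"))
print(price_if_valid("INVALID"))
```

The invalid symbol is rejected before any tool call is made, which is the failure mode the resource is meant to prevent.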

With Only a Function or Tool:

You’d need to expose get_available_symbols() as a tool (e.g., @mcp.tool()).

The LLM would need to:

Decide to call the get_available_symbols tool.

Wait for the server to run it and return the list.

Process the list and then call get_price("BTCUSDT").

Drawbacks: Extra steps (tool invocation), more tokens used in the LLM’s conversation, and higher chance of errors if the LLM doesn’t call the tool first or misinterprets the list.

Direct Function Call:

If you call get_available_symbols() locally in a script, it works fine for you, but:

The LLM (running remotely) can’t access it unless you manually send the data to the LLM’s environment.

You lose the benefits of the MCP server, which is designed to handle remote requests and integrate with clients.

Drawbacks: Not scalable for remote clients, no discoverability, and no integration with MCP’s resource system.

When Would You Call the Function Directly?

You’d call get_available_symbols() directly if:

You’re building a local script, not a server, and don’t need remote access.

You’re testing or debugging the function locally.

You don’t need MCP’s client-server architecture (e.g., no LLM or external clients).

However, since your code uses FastMCP and runs mcp.run(), it’s designed as a server for MCP clients, so the resource approach is more appropriate.

Simple Summary

Why Use the Resource? The data://available-symbols resource makes the symbol list accessible to remote clients (like LLMs) over the MCP protocol. It’s efficient (no tool invocation), discoverable (via metadata), and fits MCP’s design for read-only data.

Why Not Just Call the Function? Direct function calls work locally but don’t work for remote clients. The MCP resource lets clients like LLMs access the data seamlessly, reducing errors and improving efficiency in a client-server setup.

Practical Benefit: The resource ensures an LLM can check valid symbols (e.g., BTCUSDT) before calling tools like get_price, making interactions faster and more reliable.

I’m also pasting the full working code with the MCP resource implementation.

Sample Code 1: With MCP Resource Implementation - Function acting as a local resource

    # Official Python MCP implementation
    # Abstracts away many complexities from the MCP-based protocol
    from typing import Any

    import requests
    from mcp.server.fastmcp import FastMCP

    mcp = FastMCP("Binance MCP")


    @mcp.tool()
    def get_price(symbol: str) -> Any:
        """
        Get the current price of a crypto asset from Binance

        Args:
            symbol (str): The symbol of the crypto asset to get the price of

        Returns:
            Any: The current price of the crypto asset
        """
        symbol = get_symbol_from_name(symbol)
        url = f"https://api.binance.com/api/v3/ticker/price?symbol={symbol}"
        # A timeout keeps the tool from hanging if the API does not respond
        response = requests.get(url, timeout=10)
        response.raise_for_status()
        return response.json()


    @mcp.tool()
    def get_price_change(symbol: str) -> Any:
        """
        Get the last 24 hours price change of a crypto asset from Binance

        Args:
            symbol (str): The symbol of the crypto asset

        Returns:
            Any: The 24-hour price change data
        """
        symbol = get_symbol_from_name(symbol)
        url = f"https://data-api.binance.vision/api/v3/ticker/24hr?symbol={symbol}"
        response = requests.get(url, timeout=10)
        response.raise_for_status()
        return response.json()


    # Helper function: maps common names/aliases to Binance trading symbols
    def get_symbol_from_name(name: str) -> str:
        if name.lower() in ["bitcoin", "btc"]:
            return "BTCUSDT"
        elif name.lower() in ["ethereum", "eth"]:
            return "ETHUSDT"
        else:
            return name.upper()


    # Resource section: Removed 'annotations' to fix TypeError
    @mcp.resource(
        uri="data://available-symbols",
        name="Available Trading Symbols",
        description="Provides a list of active trading symbols available on Binance.",
        mime_type="application/json"
    )
    def get_available_symbols() -> list:
        """
        Fetches and returns a list of active trading symbols from Binance.

        Returns:
            list: A list of strings representing active symbols (e.g., ['BTCUSDT', 'ETHUSDT']).
        """
        url = "https://api.binance.com/api/v3/exchangeInfo"
        response = requests.get(url, timeout=10)
        response.raise_for_status()
        data = response.json()
        # Filter for active symbols (status == 'TRADING')
        symbols = [s['symbol'] for s in data['symbols'] if s['status'] == 'TRADING']
        return symbols


    if __name__ == "__main__":
        mcp.run()
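The filtering step inside get_available_symbols can be checked offline, without calling Binance. The sketch below pulls that one step into a helper I have named filter_trading_symbols (a hypothetical name) and runs it against a hand-made payload; the payload mimics only the two fields the sample code reads and is not real exchange data.

```python
def filter_trading_symbols(exchange_info: dict) -> list:
    """Return symbols whose status is 'TRADING', mirroring the resource's filter."""
    return [
        entry["symbol"]
        for entry in exchange_info.get("symbols", [])
        if entry.get("status") == "TRADING"
    ]


# Hand-made payload in the shape the sample code expects (not real Binance data).
sample_payload = {
    "symbols": [
        {"symbol": "BTCUSDT", "status": "TRADING"},
        {"symbol": "ETHUSDT", "status": "TRADING"},
        {"symbol": "OLDCOIN", "status": "BREAK"},
    ]
}

trading = filter_trading_symbols(sample_payload)
print(trading)
```

Keeping the filter separate from the HTTP call is one possible design choice: it lets the logic be tested without network access, while the resource function stays a thin wrapper around it.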

Sample Code 2: With MCP Resource Implementation (Local CSV File acting as the resource)

    # Official Python MCP implementation
    # Abstracts away many complexities from the MCP-based protocol
    import csv
    from typing import Any

    import requests
    from mcp.server.fastmcp import FastMCP

    mcp = FastMCP("Binance MCP")


    @mcp.tool()
    def get_price(symbol: str) -> Any:
        """
        Get the current price of a crypto asset from Binance

        Args:
            symbol (str): The symbol of the crypto asset to get the price of (e.g., BTCUSDT)

        Returns:
            Any: The current price of the crypto asset
        """
        # Note: MCP Client should use the symbol-mapping resource to validate/convert symbol
        url = f"https://api.binance.com/api/v3/ticker/price?symbol={symbol}"
        # A timeout keeps the tool from hanging if the API does not respond
        response = requests.get(url, timeout=10)
        response.raise_for_status()
        return response.json()


    @mcp.tool()
    def get_price_change(symbol: str) -> Any:
        """
        Get the last 24 hours price change of a crypto asset from Binance

        Args:
            symbol (str): The symbol of the crypto asset (e.g., BTCUSDT)

        Returns:
            Any: The 24-hour price change data
        """
        # Note: MCP Client should use the symbol-mapping resource to validate/convert symbol
        url = f"https://data-api.binance.vision/api/v3/ticker/24hr?symbol={symbol}"
        response = requests.get(url, timeout=10)
        response.raise_for_status()
        return response.json()


    # New resource: Reads symbols from a CSV file
    @mcp.resource(
        uri="data://crypto-symbols",
        name="Crypto Symbols from CSV",
        description="Provides a list of crypto trading symbols from a local CSV file.",
        mime_type="application/json"
    )
    def get_crypto_symbols() -> list:
        """
        Reads a list of crypto trading symbols from a CSV file.

        Returns:
            list: A list of strings representing trading symbols (e.g., ['BTCUSDT', 'ETHUSDT']).
        """
        file_path = r"C:\Users\Skugan\Desktop\github-cursor\mcp-course\crypto.csv"
        symbols = []
        try:
            with open(file_path, mode='r', encoding='utf-8', newline='') as file:
                reader = csv.DictReader(file)
                for row in reader:
                    # Only rows that have a 'symbol' column contribute to the list
                    if 'symbol' in row:
                        symbols.append(row['symbol'])
        except FileNotFoundError:
            raise FileNotFoundError(f"CSV file not found at {file_path}") from None
        except Exception as e:
            raise RuntimeError(f"Error reading CSV file: {e}") from e
        return symbols


    # New resource: Provides symbol mapping
    @mcp.resource(
        uri="data://symbol-mapping",
        name="Symbol Mapping",
        description="Provides a mapping of crypto names/aliases to Binance trading symbols.",
        mime_type="application/json"
    )
    def get_symbol_mapping() -> dict:
        """
        Returns a mapping of crypto names/aliases to Binance trading symbols.

        Returns:
            dict: A dictionary mapping names to symbols (e.g., {'bitcoin': 'BTCUSDT', 'eth': 'ETHUSDT'}).
        """
        return {
            "bitcoin": "BTCUSDT",
            "btc": "BTCUSDT",
            "ethereum": "ETHUSDT",
            "eth": "ETHUSDT"
        }


    if __name__ == "__main__":
        mcp.run()
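The CSV-reading logic can also be tried on its own, independent of the MCP server and of the hard-coded Windows path. The sketch below is one possible variant under my own assumptions: read_symbols is a hypothetical helper that takes the path as a parameter, and the CSV contents are made up for the demonstration.

```python
import csv
import tempfile
from pathlib import Path


def read_symbols(csv_path: Path) -> list:
    """Read the 'symbol' column from a CSV file, skipping rows without a value."""
    with csv_path.open(mode="r", encoding="utf-8", newline="") as handle:
        reader = csv.DictReader(handle)
        return [row["symbol"].strip() for row in reader if row.get("symbol")]


# Demonstration with a throwaway file (contents are made up).
with tempfile.TemporaryDirectory() as tmp:
    demo_path = Path(tmp) / "crypto.csv"
    demo_path.write_text(
        "symbol,name\nBTCUSDT,Bitcoin\nETHUSDT,Ethereum\n", encoding="utf-8"
    )
    symbols = read_symbols(demo_path)

print(symbols)
```

Passing the path in as an argument, rather than fixing it inside the function, would make the resource easier to move between machines; the resource function could then call the helper with whatever location suits the deployment.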